Music Notation: Languages Gained, Lost, Remembered, Reclaimed

- Dec 22, 2018


Herbert Brün (1918 - 2000), pioneering composer of electronic and computerized music, returned many times to the unassuming phrase, “A language gained is a language lost,” [1] in writings and speeches throughout his later career at the University of Illinois. The history and concern of notation of any kind is provocatively summed up in this quotation. Musical notation is itself a cypher for the communication of a language, and each version of notation created throughout Western music history can be understood as a living or historical dialect in this language’s development; with each successive layer of evolution, a new aspect of its communication is gained, while something must be lost from its previous incarnation. Just as one can draw a continuous line from Old English (Anglo-Saxon), spoken over 1000 years ago, to Middle English and then to Modern English, set that one thread of English beside the branching network of neighboring Celtic languages, some closely and some more distantly related to one another (Irish, Scottish Gaelic, Manx, Welsh, Cornish, Breton), and trace the creative hybrid varieties of modern English migrating around the globe today (American, Australian, Indian, South African), so too can we understand the development of Western music notation as a branching tree of languages and dialects, some closely and some distantly related, some dead and some alive, some on the brink of extinction, but each with evolutionary advantages and disadvantages particular to itself.

By tracing the evolution of these musico-genealogical characteristics, we could, as biologists can do today with animals, trace the record of survival traits and functional necessities and frivolities in this “living system” of musical communication. In this way, the death of notations, the mutation of new advantageous (and perhaps ultimately disadvantageous) traits in that notation, and the grafting of external tools to make more efficacious the imprinting of sonic meaning all stand as a record of musical thought, aesthetic, and function as incubated and tested in the crucible of history, filled with changing and challenging environmental systems: political, aesthetic, theological, philosophical, epidemiological, etc.

In modern musical education, we are often sheltered from the somewhat tumultuous sea on which our system of notation floats; at any instant, a wind, perhaps whimsical, perhaps prophetic, might push our ship off the course we surely believed we were on. Many young students of music are not even aware (often as a consequence of their teachers’ similar lack of awareness) that the system of notation and the systems communicated through this notation are highly arbitrary and not actually that old, coming into the full flowering of their modern version only about a century ago. [2] Even in the 19th century, dialects of Western music, which had been communicated for over a millennium via notated and un-notated oral traditions, were coming under siege by modern demands to “evolve.”

Canntaireachd (pronounced more-or-less as “can’t-are-rock”; Scottish Gaelic for “chanting”) was the ancient form of musical “notation” used in the Scottish Highlands to communicate the traditional “high-art” bagpipe music of medieval Scotland, Piobaireachd (also spelled Pibroch, both pronounced more-or-less as “pea-oh-brahk,” meaning “bagpiping”), also commonly known as Ceòl Mòr (“big music”). While most of Western Europe’s high-art scene fell to the aesthetic gauntlet of harmonically tertian-based polyphony by the 15th century, this form of monophonic composition, first appearing in records between the 9th and 11th centuries, persisted under continuous practice and development to the Early Modern Age. [3] Piobaireachd are long and relatively complex works in theme-and-variations form and were traditionally transmitted orally by way of Canntaireachd, a systemized collection of abstract vocalized syllables that communicate not only the pitch of particular notes but also the intricate embellishments and irregular rhythmic nuances of the music. [4]

Figure 1a, 1b, 1c, 1d: Top, the canntaireachd for the well-known (and my favorite) piobaireachd “Maol Donn” (Brown Cow), also known as “MacCrimmon’s Sweetheart,” from Volume I of Colin Campbell’s manuscript, "Nether Lorn," from 1797. Middle, the "modern" re-notation of the above canntaireachd. Bottom-left, a video from YouTube of the performance of the canntaireachd. Bottom-right, a recording from YouTube of the whole piobaireachd on bagpipes.

By the 19th century, industrialized Europe with all of its developments in musical communication was reaching Scotland’s Highlands. Consequently, this antiquated form of musical encoding began to be challenged by the seemingly streamlined and cross-cultural powers of Western Europe’s preeminent form of musical notation (the system of five lines, four spaces, black and white circles with various stems, and rhythmic hierarchies well-known today). [5] At this time, the Piobaireachd Society proposed a competition to develop a new way to notate traditional bagpipe music that would reflect modern music notation. Many proposals were made, but Angus MacKay’s [6] version using the standard five-line-four-space staff was the winner and was ultimately authorized and published by the Piobaireachd Society. Many at the time, and even scholars to the present day, consider this re-notation oversimplified, with inaccurate standardizations of rhythm and time and the over-editing or outright removal of exceptional and complicated embellishments that were present in notated Canntaireachd. [7] While medieval bagpipe music gained a powerful language of cross-cultural and cross-instrumental communication (able to be read, understood, and appreciated by anyone with a modicum of musical training), a sacrifice had to be made to conform to the standard system. In the adoption of a new notational language, part of the language’s original character was lost.

While many music students might be familiar with the history of Gregorian chant notation in the West, and how this language has “evolved,” perhaps “gaining clarity” while losing its original character along its way from the 9th century to the Renaissance and into the Modern age, such a phenomenon is not exclusive to the distant past or to distant, non-Western, “world musics.” Even in the 19th, 20th, and 21st centuries, these lingual compromises are necessitated for one reason or another. The “progress” of musical notation clearly gives us new and powerful tools, but by forgetting its past forms, we lose particular nuances of communication, which themselves might at some point be of greater utility than the newer tool. William Donaldson, in The Highland Pipe and Scottish Society 1750-1950, highlights this problem with the adoption of Western notation to Scotland’s traditional bagpipe music:

In its written form, canntaireachd provided the basis of the indigenous notational system and it was brought to its most developed form… at the end of the 18th and the beginning of the 19th Century. Although [Canntaireachd] was almost immediately superseded by a form of staff notation adapted specifically for the [Great Highland Bagpipe], and remained unpublished and unrecognised until well into the 20th Century, it remains an important achievement and gives valuable insight into the musical organisation of Ceòl Mór. [8]

This independent development of musical notation is certainly an “important achievement” for the Scottish people, which can give any musicologist insight into the musical lives, philosophies, and aesthetics of Scotland. With the complete extinction of this language, we might lose relatively little in our capacity to write music as a human species, but we would certainly lose at least one tool to communicate in at least one manner that is not afforded to us through our current standard system. Such is the nature of the evolution of any language, be it lingual, musical, or technological: if you have a floppy disc with some interesting or perhaps significantly important information on it, a modern disc drive will never help you to understand or recover it, and with but the passing of a magnet, it is lost forever.

Many musicologists have made meaningful careers from being the musical equivalent of floppy disc readers (if not beeswax cylinder readers) in our modern day. One might ask, why do you still need such antiquated means of data encryption and retrieval? The reality is that we still have much musical data encrypted in relatively extinct forms of musical notation, many of which cannot be fully unencrypted, re-encrypted, and then reproduced under modern musical-data encryption systems (the five-line-four-space staff with a handful of time-signatures we know so well). While I do not attempt to belittle or problematize our modern standard system of musical language encryption, I do hope to shed light on some of the powers and limitations it has bequeathed us while also showing some of the advantages older and “newer” systems might return to us should we familiarize ourselves with them.

Perhaps one of the first truly awe-inspiring innovations our system of Western notation evolved was the ability to demarcate time. Throughout the long period between the fall of the Roman Empire and the eventual rise of the Holy Roman Empire under Charlemagne, Western music as we know it was un-notated. The first pitch notation of any kind [9] appeared as a variety of symbols, called neumes, scribbled above words to be sung in religious services (particularly Christian) sometime in the centuries before and during the reign of Charlemagne (see Figure 2). During the reign of Charles the Great, governmental efforts were made to standardize this liturgical chant in order to unify a suddenly and arbitrarily created empire (The Holy Roman Empire). Charlemagne believed that by unifying the liturgy under one chant and one standard text, he could bring some non-arbitrary unification to his awarded empire. He realized that to do this effectively he could not rely on orally transmitted music and text, which was the primary form of musical transmission at that time. Thus, to more rapidly and assuredly unify people under one musical liturgy, this music should be written down such that the human mind would be less likely to accidentally "corrupt" (vary) it from region to region. Such needs soon brought a system of pitch notation to help ensure the fidelity of the reproduced chant, no longer safeguarded only in the memories of cathedral choirmasters and cantors.

Consequently, the form of chant notation, often called “square notation,” evolved, particularly under the innovations of Guido of Arezzo, to effectively communicate the pitch content of any melodic line of music (see Figure 3). However, it did not communicate any aspect of rhythmic performance, if there was any aspect as such at all. [10]

Figure 2: Neumatic notation from the early Middle Ages. Gestural shapes written above the text to be sung. These shaped gestures were merely a reminder to the singer of the shape of the chant tune (the melody goes up, down, zig-zags, etc.). This system was predicated on the fact that the singer would already know the chant, having learned it from a teacher and memorized it. This system gave no precise indication of pitch or rhythm.

Figure 3: Square notation of chant, which now gives a clear reproduction of pitch content, the four lines and three spaces being a prototype of our modern system with one additional line. The placement of a clef (usually a moveable C-clef) at the beginning of the line would tell the singer the relation of half- and whole-steps on the staff of lines.

It wouldn’t be until the 12th and 13th centuries at the School of Notre Dame in Paris that musicians developed any system of written temporal demarcation. This system, often known as “the six rhythmic modes of Notre Dame,” would be rather limited, confined to only the various three-grouping permutations of “longs” and “shorts” (one time-unit and half that same unit, or like the modern quarter and eighth note), specifically reflecting the common meters in Latin poetry (see Figure 4). [11] This system of notation was based on square notation, but embedded in its structuring were patterns of neume groupings, which indicated the rhythmic mode (see Figure 5).

Figure 5: Below is the beginning of Perotin's "Viderunt Omnes" for Christmas (a famous example of Notre Dame polyphony). Notice that this notation of music looks highly similar on its surface to the notation of the monophonic chant from Figure 3. However, this music notation does communicate rhythm, whereas the chant from Figure 3 arguably does not. You can hear this piece in the video appended below this image.

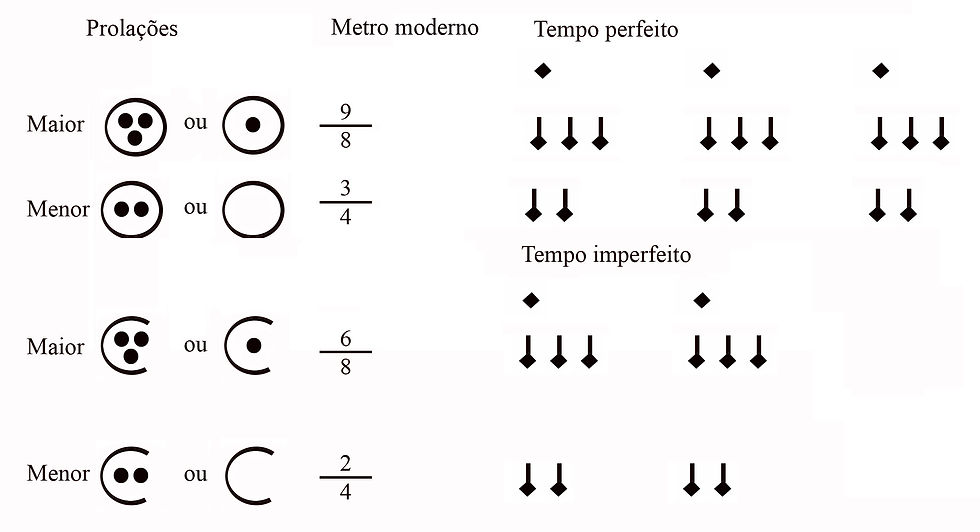

Only by the end of the 13th century was a system of high variability for rhythm devised. This was accomplished by the music theorist Franco of Cologne (c. 1280). Through his “Franconian Notation” and its use of hierarchical rhythmic mensuration, time could be complexly divided and subdivided into various strata, rather than only having polyphonic texture in the same temporal layer sitting in one “mode” of that level or another. Through the creation of rhythmic notation and the subsequent creation of a hierarchical system of rhythmic expression, one could organize musical data like never before, creating numerous strata of musical information. Naturally, one of the first musical forms that developed from this innovation was predicated on such informational stratification: the isorhythmic motet (see Figure 6 and 7). [12]

Figure 6: The basic system of Franconian Mensural Notation. Given the mensural sign to the left (the prototype of our modern "time signature"), the performer could ascertain the hierarchy of rhythmic values given by longer notes (breves) and shorter notes (semi-breves). These groups could be subdivided variously into groups of two and three, giving far more variety of rhythmic development. Furthermore, different voices could be under different mensurations at the same time, allowing some voices to move at slower rates while others move at faster rates.

Figure 7: Below is a video and transcription of an isorhythmic motet from the "Mass of Tournai" from the 14th century. Notice how the lower voice is moving at a much slower pace than the middle voice, which itself is moving at a pace faster than the lower voice but slower than the top voice. Franconian Notation allowed composers to notate the stratification of musical lines for the first time.

Idiomatic to this isorhythmic style was the stark independence of each musical stratum. Rather than all voices having equal rates of temporal progression, voices would be intentionally set at three different rates, or mensurations. As music would continue to develop into the 14th, 15th, and 16th centuries, this rhythmic stratification would continually decrease and return to relative equality, albeit with greater rhythmic variety than found in the 13th century Notre Dame School. While the early 15th century would see the popularity of Cantus Firmus masses and motets, which utilize a similar form of rhythmic stratification, this would decline in popularity over the century and ultimately lose favor in the 16th century, only resurfacing in the 17th and 18th centuries in some, mostly German, contrapuntal organ and choral works, such as Johann Pachelbel’s and Georg Böhm’s chorale fantasias for the organ and Johann Sebastian Bach’s later cantus firmus based organ works and vocal chorale fantasias, as found in his cantatas BWV 4 and BWV 80.

In the 20th century, such stark rhythmic stratification returned prominently in the experimental music of the rhythmic avant-garde. Composers like Olivier Messiaen, in pieces like Visions de l'Amen, and Conlon Nancarrow, in works like Study No. 17 for player piano, returned to the idea of measured or “mensural” rhythmic stratification (see Figure 8 and 9). Our modern system of musical notation, while allowing for such extreme rhythmic layering, does not suggest it obviously, generally requiring all instruments within a work to share the same time signature and barlines. Furthermore, most conventional uses of our modern system require barlines at all times, and the measures they mark off should typically be relatively short, usually 2 to 7 beats long with 7 being rather exceptional. It is nothing new to note, but an understanding of a system of music that operates without barlines, under stratified rather than homogeneous rhythmic hierarchies, would allow for different modes of music composition, as demonstrated in the works of the two above composers as well as Karlheinz Stockhausen, John Cage, George Crumb, and many others. Many young music students, so arbitrarily entrenched in the modern metric system, are totally ignorant of these possibilities.

Figure 8: Messiaen's "Visions de l'Amen" (see my post on this piece for further information on its isorhythmic structuring).

Figure 9: Nancarrow's "Study No. 17" for player piano, which has three musical strata in canon under the temporal proportions 12/15/20, realized as the three contrasting tempos of 138, 172.5, and 230 beats per minute. The three musical lines contain all the same pitch and rhythmic content (albeit in different octave transpositions); however, each voice is moving at a different rate according to the three above tempos.
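The arithmetic behind the tempos in Figure 9 can be checked with a few lines of Python. This is only an illustrative sketch: the function name `tempos_from_proportions` is hypothetical, and it assumes the slowest voice is the one fixed at 138 beats per minute, with the other tempos scaled from it by the stated proportions.

```python
from fractions import Fraction


def tempos_from_proportions(proportions, slowest_bpm):
    """Scale a list of integer proportions so the smallest one maps to slowest_bpm.

    Hypothetical helper for illustration; exact fractions avoid rounding drift.
    """
    unit = Fraction(slowest_bpm) / min(proportions)  # beats per minute per proportion unit
    return [float(unit * p) for p in proportions]


# Proportions 12:15:20 with the slowest voice at 138 beats per minute.
tempos = tempos_from_proportions([12, 15, 20], 138)
print(tempos)  # [138.0, 172.5, 230.0]
```

Each proportion unit is worth 11.5 beats per minute, which is why the middle tempo lands on the fractional value 172.5.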

The second profound development in Western music notation, which has perhaps given us more than it has taken, is the proliferation of “score” notation, that is, the notation of all polyphonic parts in music such that they all visually appear meaningfully juxtaposed (usually in a vertical list) according to their progression over time. Typically, this “meaningful juxtaposition” is the precise, concordant, periodic ordering of their rhythmic events from the shortest to the longest durations within any part. By developing this tool, composers were allowing themselves more complex methods to organize their ideas as well as communicate more information and more complex information to the performer, theorist, and eventually ensemble coordinator (conductor). Before score notation, it was more difficult, given only the independently notated polyphonic parts of a piece, to determine how one part would fit precisely with another and what the combination of these parts would ultimately communicate. With score notation, it became much easier to a priori internally audiate and understand a piece of music before it was ever unencrypted by performers in performance or rehearsal. (see Figure 10 and 11)

Figure 10: Below is the original manuscript of Alexander Agricola's (1457/58 - 1506) "Agnus Dei" from his "Missa in Myn Zyn." Here you can see the soprano voice's part on the top of the left page, the "alto" (tenor 1) voice on the bottom of the left page, the "tenor" (tenor 2) on the top right, and the bass on the bottom right. While this edition is fine for performance purposes, it would be difficult to select any random note from one part and know immediately to which notes it corresponds in the other parts, since these parts are not temporally synchronized in this notation (e.g. the first note in the second line of the soprano does not necessarily correspond to the first note in the second line of any of the other parts).

Figure 11: Below is a video transcription with recorded performance of the above music manuscript. With the "score notation" it is much easier to see how each part harmonically and rhythmically fits together at any one moment.

There is no specific “light-bulb” moment for this innovation; no particular person is easily given the sole credit. Such notation in various forms appears in isolation throughout the Middle Ages and Renaissance, especially in theoretical treatises, [13] which perhaps realized the pedagogical utility of such a presentation of information. However, when only performance is the aim of musical data, it is understandable that the composer or music publisher only concern themselves with writing or printing performance parts. If one were to look at music from the Renaissance during the mid to late 16th century, bar lines do begin to appear relatively frequently in some sources; one notable source that uses bar lines and “score notation” is the Baldwin Manuscript, produced in Elizabethan England during the last decade of the 16th century (see Figure 12). As larger and more varied ensembles begin to emerge at the dawn of the Baroque, barlines and score notation begin to become more ubiquitous across Europe due to difficulties with and the necessity of ensemble coordination. Perhaps no other innovation in notation, barring pitch and rhythmic notation, was more influential in fostering the prolific complexification of Western music between the 17th and 21st centuries. [14] The score afforded greater and greater levels of coordination among larger and larger ensembles.

Figure 12: The first musical piece from the Baldwin Manuscript ("Baldwin's Commonplace Book"), which shows the beginning of a five-voiced motet in score notation with barlines. Here, you see two "systems" of the score (two macro-lines of five-parted music; the first five staves being sung before the second macro-group of five staves below). This fashion of score notation continues throughout most of the book.

What might be worth taking from this innovation, however, are the benefits of not writing music with a score. If the score affords coordination, then it might actually be a hindering tool if one’s goal in composition is non-coordination. By feeling compelled to produce a score, even in the cases of purely electronic music, [15] one might ultimately be subject to purely irrelevant musical dimensions that encumber one’s present creative process. While we should recognize the power of the score, it might also be important to recognize its relative infancy and the limitations it places upon us in our ability to communicate certain ideas. For example, if Conlon Nancarrow had required himself and his music to serve the limitations of the score, the “notation” of a piece like Study No. 40a (canon e/pi) or Study No. 33 (canon 2:sqrt(2)) would have been impossible, since the polyphonic parts of these pieces essentially never coincide in time. If the score is predicated on coincidences in time, any piece predicated on the lack of such coincidence cannot rely on the score as traditionally understood (see Figure 13).

Figure 13: Nancarrow's "Study No. 33" for player piano, which contains two musical strata at two different tempos in the ratio of 2 against the square root of 2. Since the square root of two is an irrational number, any realized tempo is only an approximation, but in theory the beats of these two strata should never coincide after any shared starting point, since the ratio of 2 to the square root of 2 is itself irrational. This goes against all conventions of score notation, making any conventional score of the piece somewhat absurd. As designed, this piece is realized by a player piano, since a close approximation in human performance is extremely difficult, if not impossible.
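The claim in Figure 13 can be made precise with a short derivation. This is a sketch under two assumptions not stated in the essay: that both voices share a starting instant at $t = 0$, and that each keeps a perfectly steady beat. The symbols $T_A$ and $T_B$ (the beat lengths of the faster and slower voice) and the beat counts $m$ and $n$ are introduced here only for illustration.

```latex
\begin{align*}
\frac{T_B}{T_A} &= \frac{2}{\sqrt{2}} = \sqrt{2}
  && \text{(beat lengths are inverse to the tempo ratio } 2 : \sqrt{2}\text{)} \\
m\,T_A &= n\,T_B
  && \text{(beat } m \text{ of voice A coincides with beat } n \text{ of voice B)} \\
\frac{m}{n} &= \frac{T_B}{T_A} = \sqrt{2}
  && \text{(for } n \ge 1\text{)}
\end{align*}
```

No pair of positive integers $m$ and $n$ can satisfy the last line, because $\sqrt{2}$ is irrational, so under these assumptions the only shared beat is the common start at $m = n = 0$.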

These innovations in pitch, rhythm, and time notations, perhaps ironically, lead to the third and last innovation in musical communication I will address herein: non-prescriptive notation. Since the precision of the score brings with it limitations on our ability to express free and incommensurate musics, the next innovation I wish to address is the return to non-prescriptive systems of musical notation. Thus far, this essay has been entirely concerned with the development of greater and greater levels of prescriptiveness in musical encryption. Perhaps 1000 years have finally led us full circle in the 20th and 21st centuries, as some composers have realized that the representation of some sonic ideas cannot be encoded with pitch-rhythm-time prescriptions.

In the 20th century, composers, perhaps realizing many of the issues addressed above, sought to eschew the millennium of prescription and return to what might be aptly called “new neumatic notation.” The 20th century witnessed many examples of the abstraction of notation, returning to “pictographic” suggestions of sound, often open to much interpretation by the performer. A stark connection can be drawn between the neumatic notation of the Middle Ages and the “new neumatic notation” found in Earle Brown’s String Quartet (1965), which clearly references this less prescriptive method of musical encryption (see Figure 14). Other examples of this return to “suggestive” rather than prescriptive notation include (among many others) Karlheinz Stockhausen’s Zyklus (1959), Morton Feldman’s King of Denmark (1964), John Cage’s Song Books (1970), Krzysztof Penderecki’s De natura sonoris (1966), and John Zorn’s Cobra (1984) (see Figure 15).

Figure 14: The first page from Earle Brown's "String Quartet" from 1965.

Figure 15: The first page from Morton Feldman's "King of Denmark" from 1964.

If I may, in conclusion I wish to address one further development in the encoding of music, which will, by association, return us to our beginning with Herbert Brün, master of electronic music in the 20th century. This innovation, which you might have already guessed by now, is so significant that it might even supersede all of the above innovations, for it alone needs no form of “musical” encryption in order to deliver to us the musics that we love and study; this discovery and its consequent innovations even have the power to encode a music that has no other means of notation: the Fourier Transform and the development of the “wave file”. [16] The ability to encode music as purely mathematical summations of sine waves, and the further ability to unpack that data and audiate it with as few as one vibrating membrane through the computational power of the Fourier Transform, has perhaps shaped our musical world more today than anything else. Recorded sound that can be played anywhere at any time given the necessary audio equipment (which only becomes smaller and better with each successive year) has been profoundly transformative in how we hear, learn, understand, and use music. In a beautifully abstract way, or perhaps a profoundly concrete way, the wave file is the most precise manner to encode musical meaning. It is so universally applicable and mathematically fundamental that we have felt comfortable sending such musical artifacts to the stars in hopes that perhaps an alien race or a distant future version of ourselves might find them floating in the outer emptiness around our star (see Figure 16). This manner of sonic encryption is so powerful, so precise, so fundamental to the function of physics and our universe that we feel confident that the modest handful of waveforms precisely engraved on a golden disc, [17] for a posterity of perhaps billions of years, will, if found by any intelligent life form, communicate in some fashion the intellect of us who encoded it and our noble and urgent search for meaning in millennia of wave summation.
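The idea of sound as a summation of sine waves can be illustrated with a small, dependency-free Python sketch. Everything named here is an assumption made for illustration: the tiny sample rate, the two partials, and the helper names `synthesize` and `dft_magnitudes`. Note also that a wave file itself typically stores sampled amplitudes rather than sine components; a Fourier transform is one way to move from those samples back to the sine waves that sum to them, and the naive transform below stands in for the much faster algorithms used in practice.

```python
import cmath
import math

SAMPLE_RATE = 64  # samples per second; hypothetical and tiny, chosen for readability
N = 64            # number of samples, i.e. one second of "sound"


def synthesize(partials, n=N, rate=SAMPLE_RATE):
    """Sum sine waves given as (frequency_hz, amplitude) pairs into a list of samples."""
    return [
        sum(amp * math.sin(2 * math.pi * freq * t / rate) for freq, amp in partials)
        for t in range(n)
    ]


def dft_magnitudes(samples):
    """Naive discrete Fourier transform; returns the amplitude found at each frequency bin."""
    n = len(samples)
    magnitudes = []
    for k in range(n // 2):  # bins up to half the sample rate
        total = sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(samples))
        magnitudes.append(2 * abs(total) / n)
    return magnitudes


# Encode: two sine waves summed into a single waveform.
samples = synthesize([(5, 1.0), (12, 0.5)])

# Decode: recover which sine waves are present and how strong each one is.
recovered = [(k, round(m, 3)) for k, m in enumerate(dft_magnitudes(samples)) if m > 0.01]
print(recovered)  # [(5, 1.0), (12, 0.5)]
```

Because one second is sampled at 64 samples per second, bin `k` corresponds to `k` cycles per second, so the recovered pairs read directly as frequency and amplitude.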

Figure 16: The Voyager Golden Record, on which is encoded our lingual, musical, semiotic, and mathematical "hello" to the universe and any intelligent life form which may, in the distant future, find it floating amongst the stars.

End Notes

[1] See Brün, Herbert. When Music Resists Meaning: The Major Writings of Herbert Brün.; Smith, Stuart Saunders, and Thomas DeLio. Words and Spaces: An Anthology of Twentieth Century Musical Experiments in Language and Sonic Environments. I often personally cite Professor Paul Koonce from the University of Florida for this quotation, though I know it was never originally his; I simply recall him returning, in many classes on style and electro-acoustic music, to this phrase and the aesthetic, philosophical, and linguistic implications of such an idea.

[2] For example, while our “modern” system of musical notation has been present, in some form, since arguably the end of the 16th century and assuredly by the beginning of the 17th century, modern expectations of this system have only arrived in the past 100 years: the beaming of rhythmic groups no matter the instrument (see vocal music of the 19th century for example), a panoply of dynamic markings, precise tempo markings, irregular time-signatures (5/8 and the like), non-pitched percussion notation, etc.

[3] Many conjectures have been made as to the Highlands’ relative non-adoption of European polyphony and the persistent development of high-art monophonic music like the Piobaireachd. However, the simplest explanation is the Highlands’ geographic isolation from England and Europe generally. See Key, Jordan Alexander. Piobaireachd: The Origins of the Traditional Music of the Great Highland Bagpipes of Scotland as It Relates to Early Harp Music and Liturgical Chant from the British Isles. Master's thesis, The College of Wooster, 2013. Wooster: College of Wooster, OH.

[4] Generally, vowels represent pitch (of which there are only nine) while consonants the systemized embellishments.

[5] The system of bagpipe tuning also came under challenge, as it still reflected a just-intoned, medieval, non-equal-tempered tradition.

[6] Interestingly, one of my own ancestors, the last name Key being an American-English corruption of the clan name MacKay upon immigration to the United States.

[7] As found in the contemporaneously published Campbell Canntaireachd.

[8] Donaldson, William. The Highland Pipe and Scottish Society, 1750-1950: Transmission, Change and the Concept of Tradition. Edinburgh: John Donald, 2008. The “s” in both “unrecognised” and “organisation” is reflective of the original source.

[9] And perhaps a modest rhythmic notation, though this is still highly debatable and only theoretical.

[10] Again, this is highly debatable and there is much literature to this end.

[11] Namely, trochaic, iambic, dactylic, anapaestic, spondaic, and tribrachic.

[12] Prominent composers of this style of music include many anonymous composers of the Ars Nova Notre Dame and Tournai Cathedral School as well as Philippe de Vitry (fl. c. 1314), Bernard de Cluny (fl. c. 1360), and Guillaume de Machaut (c. 1300 – 1370).

[13] Score-like notation appears as early as the Musica enchiriadis and Scholia enchiriadis (c. 850 CE).

[14] This does not suppose the “rise to supremacy” of Western music over other forms of music from around the globe, or hope to imply that Western music is intrinsically better than other musics. It only goes to suggest that precise coordination in time of, say, 1000 performers, such as one might hear in Mahler’s 8th Symphony, was certainly aided by the ability to precisely notate massively polyphonic musical information in accord to temporal delineations. Without such an innovation in notation, highly complex musics requiring great rhythmic precision among many performers, such as in Elliott Carter’s Double Concerto, might have not been feasible.

[15] Such a practice was popular at the beginning of electronic music’s history. Given the historical importance to a composer’s posterity and the expectation from the musical public to “see a score” which embodied the composer’s process and vision, some electronic pieces composed with no need for a performer were still notated (as best as they could be) with a score, essentially serving the purpose of Augenmusik in its purest form. Composers who participated in this “Augenmusik” process include Karlheinz Stockhausen and Conlon Nancarrow, the latter of whom, composing almost exclusively for the player piano, had no technical need for a score but was asked to create such artifacts for posterity, publication, and theoretical study.

[16] Many musics have also arisen that concern themselves only with the creation of music as wave files, removed in all ways from biological audiation except for perhaps the a priori composition of the piece in the human mind, and some even attempt to remove this aspect with artificially intelligent composers. Some composers who have made this their compositional focus, to greater and lesser extents, include Herbert Brün, Milton Babbitt, Karlheinz Stockhausen, Paul Lansky, and Paul Koonce, among many others.

[17] The Voyager Golden Record

Works Cited

Brün, Herbert. When Music Resists Meaning: The Major Writings of Herbert Brün. Middletown, Conn: Wesleyan University Press, 2004.

_____. "On Anticommunication." In Words and Spaces: An Anthology of Twentieth Century Musical Experiments in Language and Sonic Environments, ed. Stuart Saunders Smith, 33-48. Rowman & Littlefield, MD: University Press of America, 1989.

Key, Jordan Alexander. Piobaireachd: The Origins of the Traditional Music of the Great Highland Bagpipes of Scotland as It Relates to Early Harp Music and Liturgical Chant from the British Isles. Master's thesis, The College of Wooster, 2013. Wooster: College of Wooster, OH.
